SK hynix Begins Volume Production of 12-Layer 36GB HBM3E Memory

Memory operation speed increased to 9.6Gb/s, heat dissipation performance improved by 10% over the previous generation, and product stability and reliability secured through enhanced warpage control

This is a Press Release edited by StorageNewsletter.com on October 8, 2024 at 2:02 pm

Summary:

- Plans to supply the highest-performing, highest-capacity 12-layer HBM3E to customers by the end of 2024

- DRAM chips made 40% thinner to increase capacity by 50% at the same thickness as the previous 8-layer product

- The company to continue HBM’s success with outstanding product performance and competitiveness

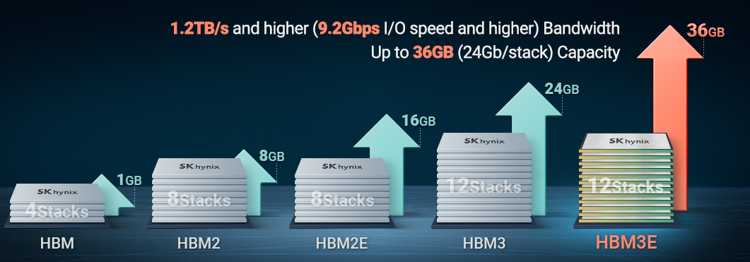

SK hynix Inc. announced that it has begun mass production of the world’s first 12-layer HBM3E product with 36GB [1], the largest capacity of any HBM [2] to date.

The company plans to supply mass-produced products to customers within the year, demonstrating its technological lead once again six months after becoming the 1st in the industry to deliver the 8-layer HBM3E product to customers in March this year.

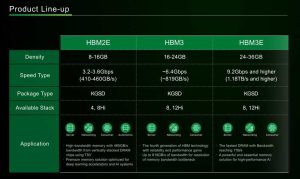

SK hynix is the only company in the world that has developed and supplied the entire HBM lineup from the 1st-gen (HBM1) to the 5th-gen (HBM3E), since releasing the world’s 1st HBM in 2013. The company plans to continue its leadership in the AI memory market, addressing the growing needs of AI companies by being the 1st in the industry to mass-produce the 12-layer HBM3E.

According to the company, the 12-layer HBM3E product meets the world’s highest standards in all areas that are essential for AI memory, including speed, capacity and stability. The firm has increased the speed of memory operations to 9.6Gb/s, the highest memory speed available today. If ‘Llama 3 70B’ [3], a Large Language Model (LLM), runs on a single GPU equipped with 4 HBM3E products, the GPU can read all 70 billion parameters 35 times per second.
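The figure of roughly 35 full reads per second can be approximately reproduced with simple arithmetic. The sketch below is illustrative only and rests on two assumptions that the release does not state: a 1024-bit data interface per HBM3E stack and weights stored at 2 bytes per parameter (FP16/BF16). The function and constant names are hypothetical.

```python
# Back-of-the-envelope check of the "35 reads per second" claim.
# Assumptions (NOT stated in the press release):
#   - each HBM3E stack exposes a 1024-bit data interface
#   - weights are stored at 2 bytes per parameter (FP16/BF16)

PIN_SPEED_GBIT_S = 9.6   # per-pin data rate, from the release
INTERFACE_BITS = 1024    # assumed interface width per stack
STACKS_PER_GPU = 4       # from the release
PARAMS = 70e9            # Llama 3 70B parameter count
BYTES_PER_PARAM = 2      # assumed FP16/BF16 weights


def stack_bandwidth_gb_s(pin_speed_gbit_s, interface_bits):
    """Peak bandwidth of one stack in GB/s (8 bits per byte)."""
    return pin_speed_gbit_s * interface_bits / 8


def full_reads_per_second(bandwidth_gb_s, params, bytes_per_param):
    """How many times per second the whole model could be streamed."""
    model_size_gb = params * bytes_per_param / 1e9
    return bandwidth_gb_s / model_size_gb


per_stack = stack_bandwidth_gb_s(PIN_SPEED_GBIT_S, INTERFACE_BITS)
total = per_stack * STACKS_PER_GPU
reads = full_reads_per_second(total, PARAMS, BYTES_PER_PARAM)

print(f"Per stack: {per_stack:.1f} GB/s")
print(f"Four stacks: {total:.1f} GB/s")
print(f"Full model reads per second: {reads:.1f}")
```

Under these assumptions the result lands close to the 35 reads per second quoted by the company; a different weight precision or interface width would change the number proportionally.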

The company has increased the capacity by 50% by stacking 12 layers of 3GB DRAM chips at the same thickness as the previous 8-layer product. To achieve this, the company made each DRAM chip 40% thinner than before and stacked the chips vertically using TSV [4] technology.
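The capacity and thickness figures are consistent with each other, as the short check below suggests. It is a simplified sketch: it counts only the DRAM dies and ignores bonding layers, the base die, and molding, which the release does not quantify. All names are hypothetical.

```python
# Sanity check of the capacity and thickness figures in the release.
# Simplification (an assumption): only DRAM die thickness is counted;
# gaps between dies, the base die, and molding are ignored.

GB_PER_DIE = 3           # from the release
PREV_LAYERS = 8          # previous HBM3E product
NEW_LAYERS = 12          # new HBM3E product
PREV_DIE_THICKNESS = 100  # previous die thickness, in percent units
NEW_DIE_THICKNESS = 60    # 40% thinner than before


def stack_capacity_gb(layers, gb_per_die=GB_PER_DIE):
    """Total capacity of a stack in GB."""
    return layers * gb_per_die


def capacity_increase_percent(old_gb, new_gb):
    """Relative capacity gain in percent."""
    return (new_gb - old_gb) * 100 / old_gb


def relative_die_height(layers, die_thickness):
    """Summed die height, where one previous-gen die equals 100."""
    return layers * die_thickness


old_gb = stack_capacity_gb(PREV_LAYERS)
new_gb = stack_capacity_gb(NEW_LAYERS)
old_height = relative_die_height(PREV_LAYERS, PREV_DIE_THICKNESS)
new_height = relative_die_height(NEW_LAYERS, NEW_DIE_THICKNESS)

print(f"Capacity: {old_gb}GB -> {new_gb}GB "
      f"(+{capacity_increase_percent(old_gb, new_gb):.0f}%)")
print(f"Summed die height: {old_height} -> {new_height} (relative units)")
print("Fits in the previous height:", new_height <= old_height)
```

Twelve dies at 60% of the earlier thickness add up to less silicon height than eight dies at full thickness, which leaves some margin for the additional inter-die layers.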

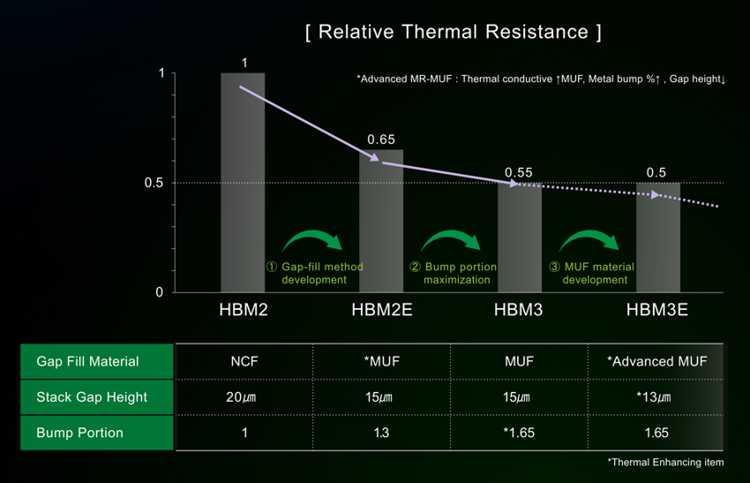

The firm also solved structural issues that arise from stacking thinner chips higher by applying its core technology, the Advanced MR-MUF [5] process. This process provides 10% higher heat dissipation performance than the previous generation and secures the stability and reliability of the product through enhanced warpage control.

“SK hynix has once again broken through technological limits demonstrating our industry leadership in AI memory,” said Justin Kim, president (head of AI Infra). “We will continue our position as the No.1 global AI memory provider as we steadily prepare next-generation memory products to overcome the challenges of the AI era.”

[1] Previously, the maximum capacity of HBM3E was 24GB from 8 vertically stacked 3GB DRAM chips.

[2] HBM (High Bandwidth Memory): This high-value, high-performance memory vertically interconnects multiple DRAM chips and increases data processing speed in comparison to traditional DRAM products. HBM3E is the extended version of HBM3, the 4th-gen product that succeeds the previous generations of HBM: HBM, HBM2 and HBM2E.

[3] Llama 3: Open-source LLM released by Meta in April 2024, with 3 sizes in total: 8 billion, 70 billion, and 400 billion parameters.

[4] TSV (Through Silicon Via): This advanced packaging technology links upper and lower chips with an electrode that vertically passes through thousands of fine holes on DRAM chips.

[5] MR-MUF (Mass Reflow Molded Underfill): The process of stacking semiconductor chips, injecting liquid protective materials between them to protect the circuit between chips, and hardening them. The process has proved to be more efficient and effective for heat dissipation than the method of laying film-type materials for each chip stack. SK hynix’s advanced MR-MUF technology is critical to securing stable HBM mass production, as it provides good warpage control and reduces the pressure on the chips being stacked.
