Micron HBM3E 12-High 36GB Delivers Lower Power Consumption than Competitors’ 8-High 24GB Offerings

Despite having 50% more DRAM capacity in package

This is a Press Release edited by StorageNewsletter.com on September 18, 2024 at 2:01 pm. This article was written in September 2024 by Raj Narasimhan, SVP and GM of Micron Technology, Inc.’s compute and networking business unit.


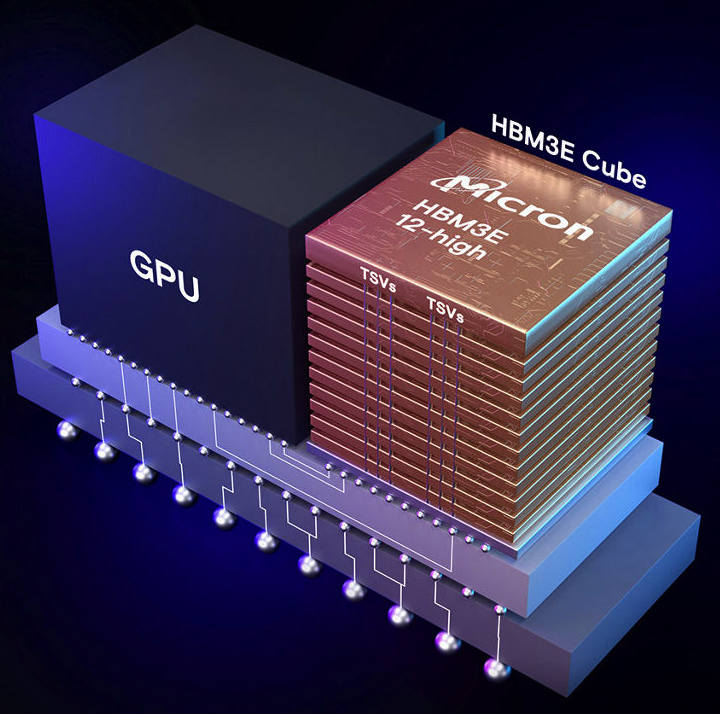

As AI workloads continue to evolve and expand, memory bandwidth and capacity are increasingly critical for system performance. The latest GPUs in the industry need the highest-performance high-bandwidth memory (HBM), significant memory capacity, and improved power efficiency. Micron is at the forefront of memory innovation to meet these needs and is now shipping production-capable HBM3E 12-high to key industry partners for qualification across the AI ecosystem.

HBM3E 12-high 36GB delivers significantly lower power consumption than competitors’ 8-high 24GB offerings, despite having 50% more DRAM capacity in the package

Micron HBM3E 12-high offers 36GB of capacity, a 50% increase over current HBM3E 8-high offerings, allowing larger AI models like Llama 2 with 70 billion parameters to run on a single processor. This added capacity speeds time to insight by avoiding CPU offload and GPU-GPU communication delays.
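The capacity figures above can be checked with some back-of-the-envelope arithmetic. The sketch below is illustrative only: the 2 bytes per parameter (16-bit weights) and the weights-only footprint are assumptions not stated in the release, and the function names are hypothetical. It does not account for KV cache, activations or runtime overhead, and it says nothing about how many HBM stacks a given processor actually carries.

```python
import math

GB = 10**9  # decimal gigabytes, used here for simplicity (assumption)


def capacity_increase_pct(new_gb: float, old_gb: float) -> float:
    """Percentage increase going from old_gb to new_gb."""
    return (new_gb - old_gb) / old_gb * 100


def weights_footprint_gb(num_params: int, bytes_per_param: int) -> float:
    """Memory for model weights only (no KV cache, activations or overhead)."""
    return num_params * bytes_per_param / GB


def stacks_needed(footprint_gb: float, stack_gb: float) -> int:
    """Minimum number of HBM stacks whose combined capacity holds the footprint."""
    return math.ceil(footprint_gb / stack_gb)


# 36GB (12-high) versus 24GB (8-high), figures from the release
print(f"Capacity increase: {capacity_increase_pct(36, 24):.0f}%")

# Llama 2 70B with 16-bit weights (2 bytes per parameter is an assumption)
footprint = weights_footprint_gb(70_000_000_000, 2)
print(f"Weights footprint: {footprint:.0f} GB")
print(f"36GB stacks needed: {stacks_needed(footprint, 36)}")
print(f"24GB stacks needed: {stacks_needed(footprint, 24)}")
```

Under these assumptions, the weights alone need fewer 36GB stacks than 24GB stacks, which is one way to read the claim that a larger model can fit on a single processor.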

HBM3E 12-high 36GB delivers lower power consumption than competitors’ HBM3E 8-high 24GB solutions. It offers more than 1.2TB/s of memory bandwidth at a pin speed greater than 9.2Gb/s. Together, these advantages of Micron HBM3E, maximum throughput with the lowest power consumption, can ensure optimal outcomes for power-hungry data centers.
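The relationship between pin speed and per-stack bandwidth can be sketched as pin speed multiplied by interface width, divided by 8 bits per byte. The 1,024-bit interface width used below is an assumption based on the JEDEC HBM3 interface and is not stated in the release. The function names are hypothetical, and 1TB/s is taken as 1,000GB/s.

```python
HBM3_INTERFACE_WIDTH_BITS = 1024  # assumption: JEDEC HBM3-class stack interface


def stack_bandwidth_gb_per_s(pin_speed_gbit_s: float,
                             width_bits: int = HBM3_INTERFACE_WIDTH_BITS) -> float:
    """Peak per-stack bandwidth in GB/s for a given per-pin data rate in Gb/s."""
    return pin_speed_gbit_s * width_bits / 8


def min_pin_speed_gbit_s(target_gb_per_s: float,
                         width_bits: int = HBM3_INTERFACE_WIDTH_BITS) -> float:
    """Per-pin data rate in Gb/s needed to reach a target bandwidth in GB/s."""
    return target_gb_per_s * 8 / width_bits


# Bandwidth at exactly 9.2Gb/s per pin
print(f"{stack_bandwidth_gb_per_s(9.2):.1f} GB/s at 9.2 Gb/s per pin")

# Pin speed needed for 1.2TB/s (1,200 GB/s)
print(f"{min_pin_speed_gbit_s(1200):.3f} Gb/s per pin for 1.2 TB/s")
```

With these assumptions, exactly 9.2Gb/s per pin gives roughly 1.18TB/s, and 1.2TB/s corresponds to about 9.4Gb/s per pin, which is consistent with the release's wording of a pin speed "greater than 9.2Gb/s".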

Additionally, HBM3E 12-high incorporates fully programmable memory built-in self-test (MBIST) that can run system-representative traffic at full spec speed, providing improved test coverage for expedited validation, enabling faster time to market, and enhancing system reliability.

Robust ecosystem support

Micron is shipping production-capable HBM3E 12-high units to key industry partners for qualification across the AI ecosystem. This HBM3E 12-high milestone demonstrates Micron’s innovations to meet the data-intensive demands of the evolving AI infrastructure.

Micron is also a partner in TSMC’s 3DFabric Alliance, which helps shape the future of semiconductor and system innovations. AI system manufacturing is complex, and HBM3E integration requires close collaboration between memory suppliers, customers and outsourced semiconductor assembly and test (OSAT) players.

In a recent exchange, Dan Kochpatcharin, head of the ecosystem and alliance management division at TSMC, commented: “TSMC and Micron have enjoyed a long-term strategic partnership. As part of the OIP ecosystem, we have worked closely to enable Micron’s HBM3E-based system and chip-on-wafer-on-substrate (CoWoS) packaging design to support our customer’s AI innovation.”

In summary, here are the HBM3E 12-high 36GB highlights:

- Undergoing multiple customer qualifications: Micron is shipping production-capable 12-high units to key industry partners to enable qualifications across the AI ecosystem.

- Seamless scalability: With 36GB of capacity (a 50% increase over current HBM3E 8-high offerings), HBM3E 12-high allows data centers to scale their increasing AI workloads seamlessly.

- Efficiency: It delivers lower power consumption than competitors’ HBM3E 8-high 24GB solutions.

- Superior performance: With pin speed greater than 9.2Gb/s, it delivers more than 1.2TB/s of memory bandwidth, enabling fast data access for AI accelerators, supercomputers and data centers.

- Expedited validation: Fully programmable MBIST capabilities can run at speeds representative of system traffic, providing improved test coverage for expedited validation, enabling faster time to market and enhancing system reliability.

Looking ahead

Micron’s data center memory and storage portfolio is designed to meet the evolving demands of generative AI workloads. From near memory (HBM) and main memory (high-capacity server RDIMMs) to Gen5 PCIe NVMe SSDs and data lake SSDs, the company offers products that scale AI workloads efficiently and effectively.

As Micron continues to focus on extending its industry leadership, it is already looking toward the future with its HBM4 and HBM4E roadmap. This forward-thinking approach ensures that the firm remains at the forefront of memory and storage development, driving the next wave of advancements in data center technology.

For more information, visit Micron’s HBM3E page.
